Signing Off AI?

Sleeping Well?

Leaders responsible for AI systems that impact people's health, safety or rights are pivoting to a new category of AI Assurance technology.

Defence | Healthcare | Critical Infrastructure | Financial Services

Evolving regulations. Technical complexity. Constant flux. Multiple stakeholders.

AI Assurance is no easy job.

Lawmakers, users, and society rightly have high expectations of AI systems in regulated sectors. Their demands are impacting policies across security, risk, data, IT, procurement, and insurance.

Whilst consensus is building around AI Governance (the processes to follow), AI Assurance (tests and monitoring at the model & system level) has not kept pace.

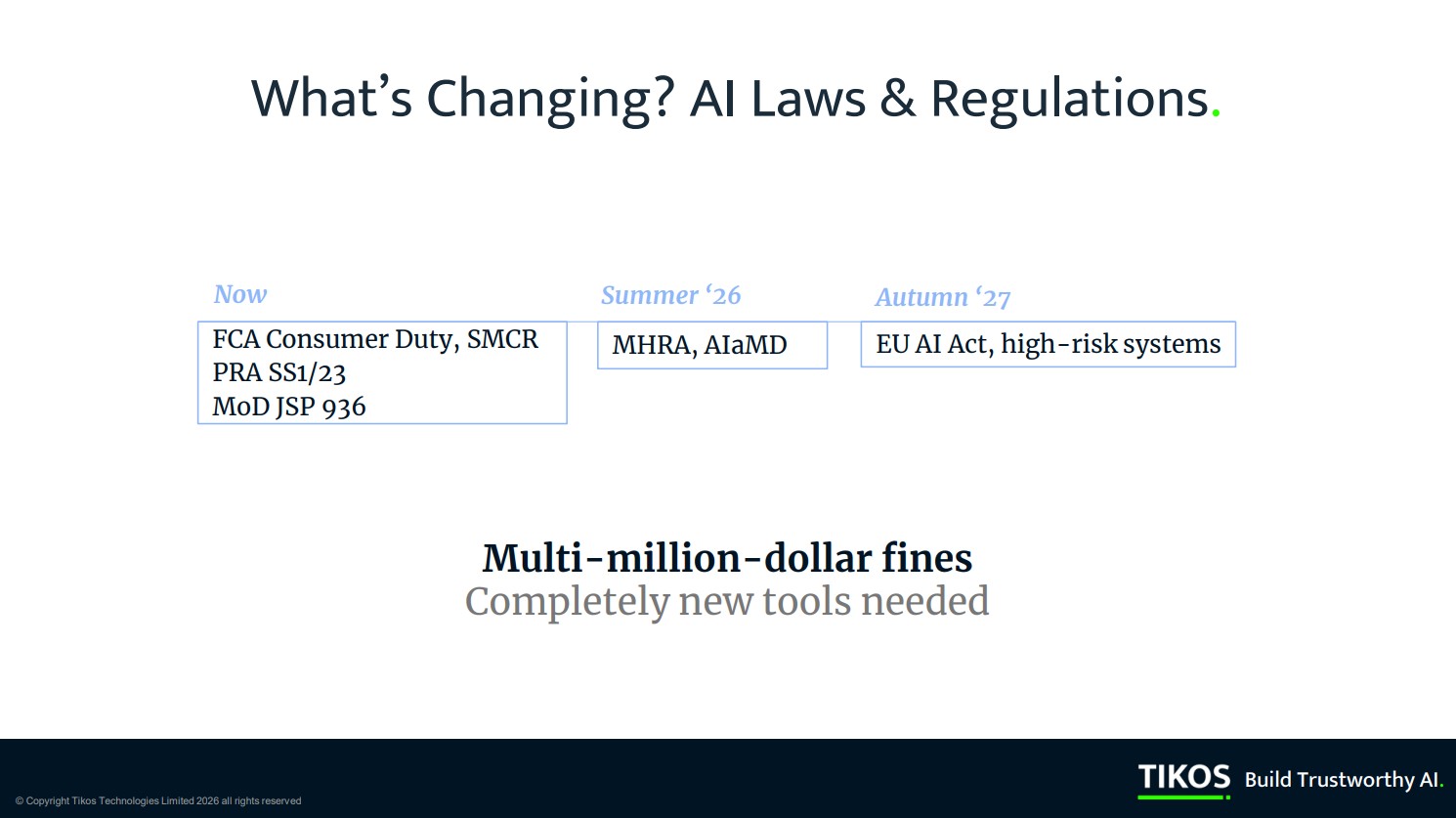

EU AI Act, NIST AI RMF, GDPR Art. 22, sectoral regulations (FCA, MHRA), ISO/IEC 42001

Head of Risk, GRC team, Chief Data Officer, CTO, AI/ML, data and tech teams, product managers, external AI governance professionals, certification bodies, internal and external auditors, regulators.

Impact evaluation, Bias audit, Compliance audit, Certification, Conformity assessment, Performance testing, Formal verification.

Planning, Data preparation, Model development, System development, Evaluations, Validation and verification, Operation, monitoring and reporting.

Solved by TIKOS®

Use throughout the AI system lifecycle

TIKOS® Evaluate

TIKOS® Explain

TIKOS® Explore

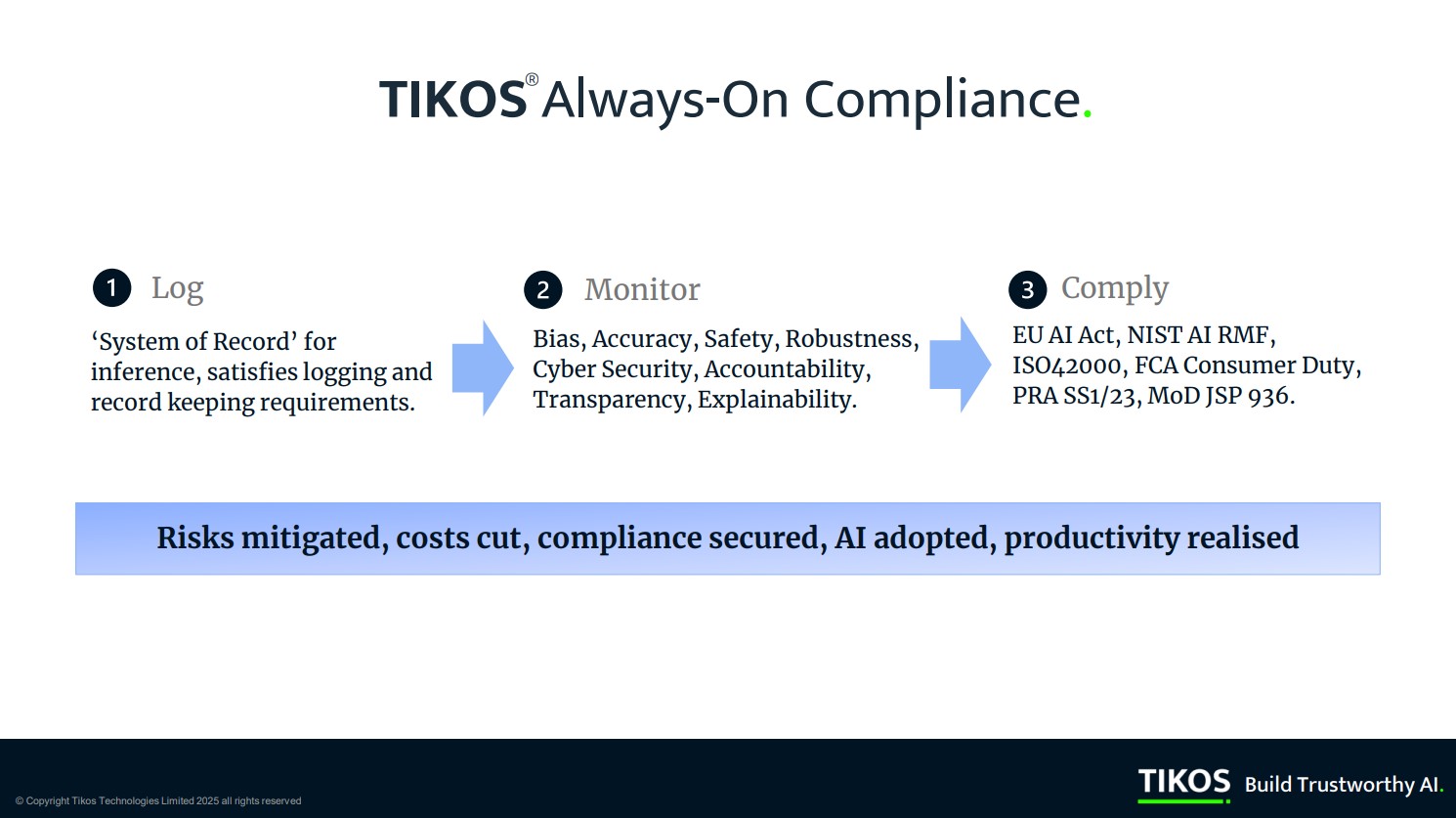

TIKOS™ addresses the core challenges of trustworthy AI development, deployment, procurement, and monitoring:

Fair & Unbiased

Transparent & Explainable

Accurate, Safe & Robust

Accountable

Features

Regulations-first approach

TIKOS™ is engineered to deliver AI Assurance in regulated, high-stakes environments, across the major regulatory and standards frameworks.

Proprietary technology

TIKOS™ is built from the ground up on original PhD research in AI transparency, reasoning and explainability.

Open architecture

TIKOS™ is agnostic to model class, developer framework, tooling and deployment infrastructure.

Expert-in-the-loop

TIKOS™ draws on organisational know-how through an ‘expert-in-the-loop’ system design.

Flexible deployment

TIKOS™ can be deployed as SaaS, with access via APIs, an SDK or the platform, including in a private cloud.